What are Docker, Containers, Dockerfile, and Docker Images?

A simple, easy-to-follow explanation of Docker, whether you're a beginner or a seasoned pro!

Engineering products through AI & full-stack skills! Heavily inspired by Silicon Valley, I explore and learn through GitHub, YouTube, blog posts, and newsletters to grow my skill set every day, be it engineering, entrepreneurship, or startup building. I aspire to work for a Silicon Valley company someday and am steadily finding my way there!

Docker

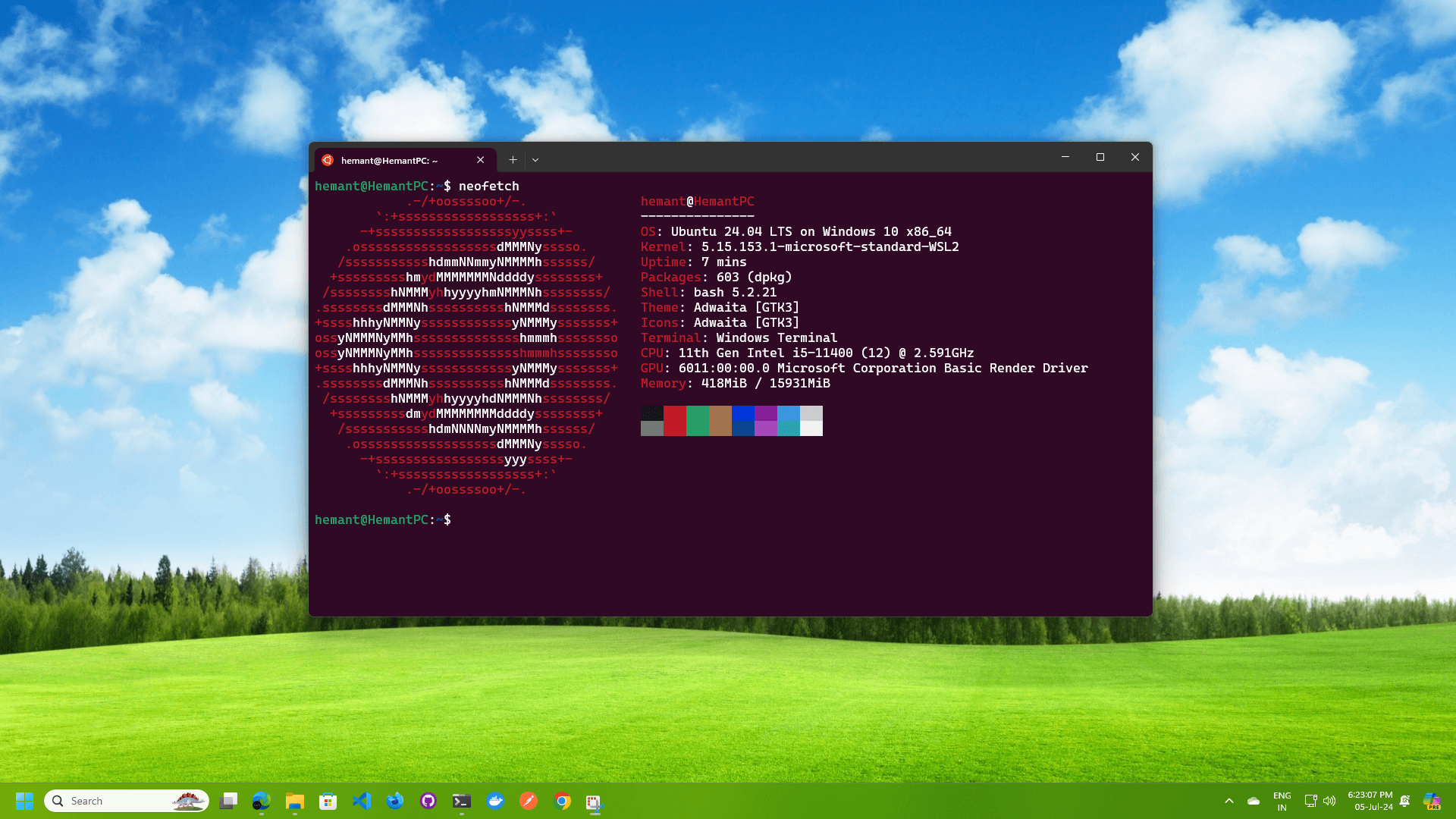

Docker is a containerization platform that frees developers from problems like “It works on my machine” by letting them develop, run, and ship applications seamlessly. It does this by packaging the application, its code, and its project files into a container that holds everything it needs to run smoothly and work as expected.

Before we dive into Docker and its related technologies, we first need to understand the main concept underlying Docker: containers. Containers are the technology the open platform known as Docker is built on. It’s like how Google took the Linux kernel and built Android OS on top of it.

Containers

So, what are containers anyway?

Well, containers in general are nothing but isolated, lightweight, self-sufficient packaged units that include an application's code, runtime, libraries, and configuration files.

If I had to summarize what containers actually are, this is the analogy that works best for me, and it might work for you as well:

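Containers are the “just-enough” file-system, environment, and configuration your software needs to run exactly as intended. If it needs a file-system to read local files, the container includes just enough of one. The one thing a container does not bring along is its own kernel. Every container shares the kernel of the Linux host it runs on, which is a big part of why containers are so lightweight. On macOS and Windows, Docker Desktop runs a lightweight Linux VM to provide that kernel.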

Containers are a really great piece of engineering. They let you isolate each application within the environment it needs to operate and perform at its full capability.

Dockerfile

Now that we know what containers are and how Docker makes containerization technology easy to work with, let’s dive deeper into how you can wield the power Docker gives you.

A Dockerfile is the spec-sheet of your container. In it, you define every requirement your application needs to work as intended and perform at its best. Think of building a PC: before you start, you decide on the specs you want, such as the processor, the amount of memory, and the storage. Likewise, the Dockerfile is where you write down, for the Docker Engine, all the requirements and setup you need in the container that will hold your application.

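```dockerfile
# Base image: Node.js 22 on a minimal Alpine Linux userland
FROM node:22-alpine AS base

# Dependency stage built on top of the base image
FROM base AS deps

# All following paths are relative to /app inside the container
WORKDIR /app

# Install pnpm, then install dependencies from package.json.
# Copying package.json on its own before the source code lets Docker
# reuse the cached install layer when only index.js changes.
RUN npm i -g pnpm
COPY package.json ./
RUN pnpm install

# Copy the application code
COPY index.js ./

# Declare the port the app listens on (EXPOSE documents it; docker run -p publishes it)
EXPOSE 3000

# Command run when a container starts from this image
CMD ["node", "index.js"]
```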

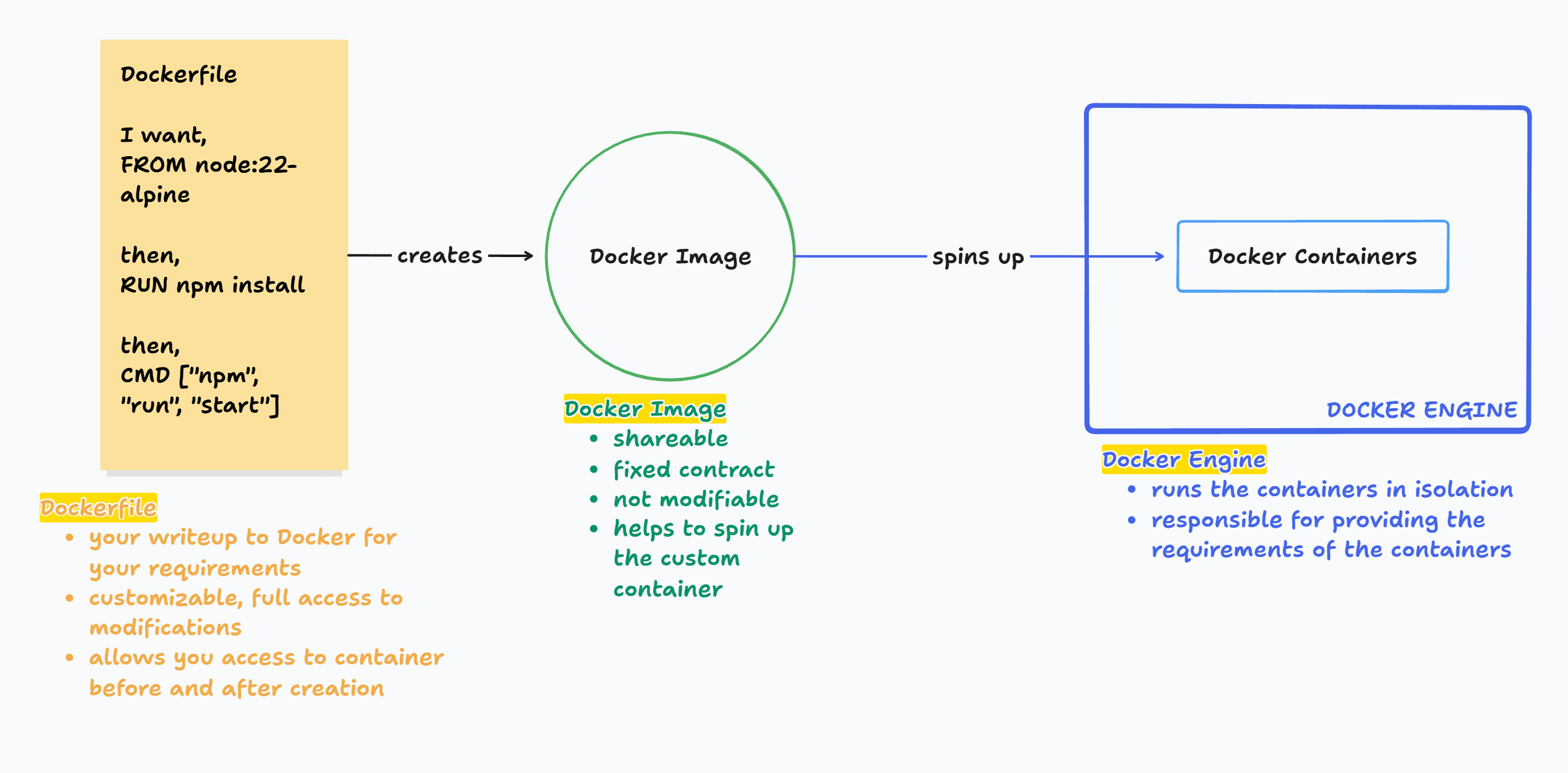

Docker Image

Now that you have defined all your requirements in the Dockerfile, you are ready to move ahead and build an image from it.

A Docker Image is built from all the requirements and configurations you wrote in the Dockerfile spec-sheet, using the command:

```shell
docker build -t myapp .
```

-t (short for --tag) gives the image a name, optionally with a tag in name:tag form, so you can easily find it in the list of Docker Images in your Docker Desktop application, or OrbStack if you prefer that.

The command above tells Docker to build a new Docker Image from your spec-sheet. Think of it as a contract between you and Docker: this image is now the final, built version of the requirements written in your Dockerfile. It works much like Git. You first stage your changes, which you can keep modifying, and only after one more step do they become a fixed commit. In the same way, the Dockerfile is the spec-sheet you can keep customizing, while the Docker Image built from it is the fixed result.

Docker Images are shareable. You can push them to Docker Hub, and anyone you share them with can pull them from there. Images are read-only once they are built, and that keeps your software application (or whatever else you put in the container) intact and safe: everyone who pulls the image gets exactly the same files and configuration. If you shared only the Dockerfile, it could easily be changed, and every rebuild could pull in a different base image or different dependency versions. That would undermine the whole point of packaging your application. Shipping the built image is the feature of containerization that guards against this.

Docker Images are later run to spin up a container that follows the configurations, requirements, and features specified in the Dockerfile.

Docker Container

You can spin up containers from the Docker Image we created in the last step, and it is very straightforward. The command below starts a container from the image built with your custom specs and requirements. You can refer to the official Docker documentation for the run command if you want to learn more about it.

```shell
docker run -d myapp
```

-d (short for --detach) runs the container in detached mode. In detached mode, the container runs in the background, so it doesn't block the terminal you started it from; Docker only prints the ID of the container you just spun up.
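A sketch of working with a detached container follows. To keep it self-contained, it uses the public alpine:3.20 image running a placeholder sh -c command instead of myapp. With your own image, you would run docker run -d myapp and use the ID it prints in the same way.

```shell
# Start a container in the background; docker run -d prints only the container ID
CONTAINER_ID=$(docker run -d alpine:3.20 sh -c 'echo started; sleep 300')

# List running containers; the new one appears here
docker ps

# Show what the container has written to stdout/stderr so far
docker logs "$CONTAINER_ID"

# Stop the container (SIGTERM, then SIGKILL after a 10-second grace period), then remove it
docker stop "$CONTAINER_ID"
docker rm "$CONTAINER_ID"
```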

If your app runs a server, you need to map a port on the host system to a port inside the isolated container. That way, you can reach the server running inside the container from your own system.

```shell
docker run -d -p 3000:3000 myapp

# OR

docker run -dp 3000:3000 myapp
```

-p (short for --publish) maps ports in the order HOST_PORT:CONTAINER_PORT. Traffic sent to HOST_PORT on your machine is forwarded to CONTAINER_PORT inside the container, where your server is listening.

Mindmap - Visualizing everything

Let’s visualize everything we’ve learned so far with a simple, easy-to-understand diagram I built.

Congratulations 🎉 on making it to the end! I hope I was able to help you understand the nitty-gritty of Docker and containerization technology.

You can find me on:

Twitter: hemantsharma.tech/x

LinkedIn: hemantsharma.tech/linkedin

GitHub: hemantsharma.tech/github

Website: hemantsharma.tech